Seaways Free Article: The hidden engines of maritime failure

How small cognitive distortions escalate

by Andrew Baker AFNI

Maritime casualties are almost never the result of a single dramatic mistake. They emerge from an escalation pathway: a sequence in which small distortions in perception and judgement accumulate until the bridge team’s mental model no longer matches the real situation. By the time the mismatch becomes obvious, the vessel’s position, energy, or proximity to infrastructure may be beyond conventional recovery.

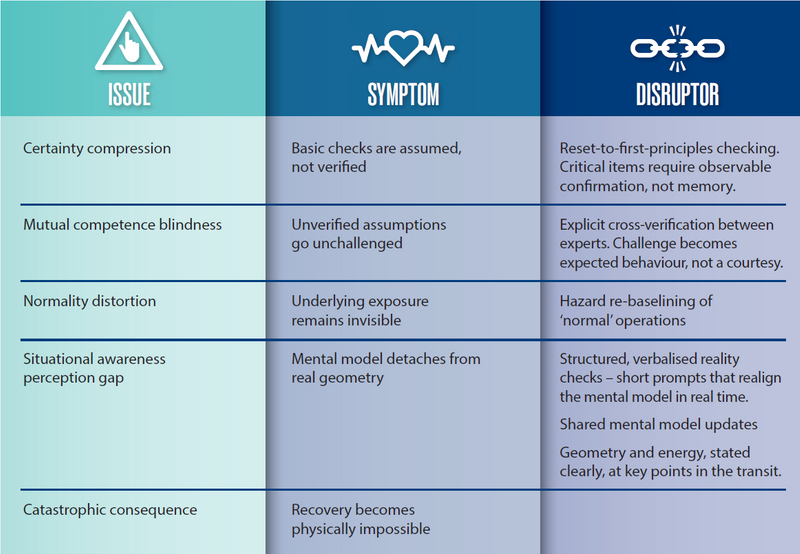

Across investigations, simulator work and pilotage reviews, a familiar pattern appears. Before any visible error, there is usually a cluster of subtle, repeatable cognitive drivers that erode awareness long before anyone acts in a way that looks obviously wrong. This article examines four of those drivers:

- Certainty compression: when the outcome of basic checks is assumed, not verified;

- Mutual competence blindness: when shared expertise suppresses scrutiny;

- Normality distortion: when long periods of success conceal exposure;

- Situational awareness perception gap: when the team’s picture no longer matches the vessel’s actual state.

Each of these can degrade performance on its own. Together, they create the conditions for high-consequence failure.

Getting away from blame

Informal discussions after incidents often include terms like ‘complacency’ and ‘incompetence’. These are simple labels, but strategically unhelpful. They carry moral judgement, provoke defensiveness, and imply personal shortcomings that professionals reject — often rightly. No pilot or organisation aspires to these labels, and the shame they trigger encourages psychological distancing: ‘That doesn’t apply to us.’

However, the behaviour that gets labelled as complacency or incompetence is almost never due to a deficit of care or commitment. It is the outcome of the universal cognitive mechanisms listed above, which affect all humans, especially during familiar, high-frequency operations. People who believe they are immune have, more often than not, simply avoided the moment when these mechanisms intersect beyond the point of recovery.

If the industry wants to interrupt escalation pathways earlier, it must move beyond blame labels to structural acknowledgement of these drivers. They provide vocabulary for recurring patterns traditionally dismissed as simply ‘human error.’

Certainty compression

Certainty compression is the rapid stabilisation of a mental model under familiarity. The operator becomes so sure the fundamentals have been attended to that they no longer consciously verify them. Steps that were once deliberate turn into assumptions.

This mechanism is visible in everyday life. You might search every room for your sunglasses — checking tables, counters, even the car — becoming increasingly certain they must be lost. Only later do you discover the sunglasses were on your head all along. The mental model (‘the glasses aren’t on me’) hardens faster than the evidence justifies. Assumption replaces verification.

At sea, the same mechanism exists. Some of the ways in which it manifests include:

- Passage plans accepted on sight, despite evident errors;

- Partial equipment tests where missing steps go unnoticed;

- Procedures executed from memory rather than from documents;

- Assumed system configurations that remain unverified.

In every case, the operator genuinely believes the basics have been checked, and the feeling of certainty outweighs evidence. This is a particular danger with high-frequency tasks. The actions carried out become so familiar that the brain compresses them – effectively, it runs on automatic. Repetition creates vulnerability.

The Maersk Shekou incident shows how these cognitive patterns provide a useful lens for understanding operational sequences. On 30 August 2024, the vessel approached the Fremantle inner harbour wheel-over point at over 7 knots on 083°. The planned manoeuvre required a timely port turn initiated by a helm order. That helm order was never given. The helmsman, acting correctly on the last instruction, continued to maintain 083°. To the pilot, the turn existed mentally: he believed he had given the order. To the helmsman, it did not exist at all. The most basic first principle in confined-water manoeuvring (has the turn been ordered and started?) went unverified. This illustrates how certainty compression can manifest in practice: assumption standing in for confirmation.

The defence is not telling people to do better, but designing out the points of failure:

- Checklists that force first-principles verification;

- Procedures that cannot be completed from memory;

- Explicit confirmation of geometry and energy before entering critical segments.

Mutual competence blindness

The paradox of high competence is simple: the stronger the mutual respect between experts, the more easily scrutiny collapses.

Mutual competence blindness is the tendency of professionals to reduce cross-checking precisely because they are dealing with other professionals. Challenge becomes informal, deferred, or softened. Weak signals vanish under assumptions of shared understanding.

Examples include:

- Masters deferring excessively to pilots;

- Pilots assuming ship staff have complied fully with safety management system (SMS) requirements;

- Bridge teams accepting degraded states without challenge.

The Maersk Shekou sequence also reflects the dynamics at play in mutual competence blindness.

As described above, the pilot believed he had issued the helm order, while the helmsman maintained 083° exactly as instructed. The supervising pilot queried ‘not turning?’ but did not verify whether the helm order had been issued, repeated, and executed. The simplest check — ‘Helm, what is your order?’ — never occurred. Shared expertise suppressed verification.

Mutual competence blindness erodes bridge resource management (BRM) at exactly the point where it should be strongest: between senior professionals operating together on the bridge. Countermeasures require:

- Explicit cross-verification roles;

- Structured challenge protocols;

- Clear accountability for confirming helm orders and configuration states.
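The check that never occurred (has the helm order been issued, repeated, and executed?) can be pictured as a simple closed loop. The sketch below is only an illustration under stated assumptions: the `HelmOrder` class, its field names, and the example order "port 20" are hypothetical and do not come from the incident record or from any bridge system.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class HelmOrder:
    """One helm order moving through a closed loop (hypothetical model)."""
    order: str
    issued: bool = False      # the order has actually been spoken aloud
    read_back: bool = False   # the helmsman has repeated it
    executed: bool = False    # rudder movement or heading change observed


def unverified_steps(o: HelmOrder) -> list[str]:
    """Return the closed-loop steps that have not been positively confirmed."""
    steps = [
        ("issued", o.issued),
        ("read_back", o.read_back),
        ("executed", o.executed),
    ]
    return [name for name, done in steps if not done]


def clear_to_proceed(o: HelmOrder) -> bool:
    """The turn counts as started only when every step is confirmed."""
    return not unverified_steps(o)


# The turn exists in someone's head, but nothing has been confirmed.
assumed = HelmOrder(order="port 20")  # "port 20" is an illustrative value
print(unverified_steps(assumed))  # ['issued', 'read_back', 'executed']
print(clear_to_proceed(assumed))  # False
```

The design point is that every flag defaults to unconfirmed. Belief that the order was given changes nothing in the record; only an observed confirmation does.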

When no incidents look like no risk

Normality distortion occurs when long periods of routine success are misinterpreted as evidence of inherent safety. The absence of incidents becomes ‘proof’ of a robust safety system, when it may simply reflect luck or untested assumptions. This distortion hides structural exposure — especially where vessel size, infrastructure limits, and traffic complexity have evolved far beyond original design parameters.

The 2024 incident in which the container ship Dali, on charter to Maersk, lost electrical power and struck the Francis Scott Key Bridge, collapsing a major span, is a case in point. Vulnerability had been present for decades, through increasing ship size, inadequate fendering and reliance on shipboard reliability. Countless safe transits had normalised the exposure. When a rare but foreseeable failure finally occurred and the vessel lost power, there was no room for recovery.

Normality distortion thrives where consequence is high but frequency is low. Countermeasures include:

- Periodic reassessment of ‘routine’ operations;

- Treating absence of incidents as a trigger for re-evaluation, not validation that the principles are correct;

- Integrating infrastructure limits into risk assessments, not assuming they will be sufficient.
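A short worked bound illustrates why an incident-free record is weak evidence. It rests on a simplifying assumption that is not in the article: every transit carries the same, independent probability $p$ of a serious failure. The chance of seeing no incidents in $n$ transits is then

$$
P(\text{no incidents in } n \text{ transits}) = (1 - p)^n .
$$

A clean record only rules out values of $p$ that make this probability small. Requiring $(1 - p)^n \ge 0.05$ gives

$$
p \le 1 - 0.05^{1/n} \approx \frac{3}{n} .
$$

For a hypothetical $n = 1000$ incident-free transits, the record is still consistent with a failure probability of roughly 3 in 1,000 per transit. Real transits are neither identical nor independent, so this is a rough illustration, not a risk model.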

The final breakdown

When the previous three drivers occur at the same time, they create the conditions for a situational awareness perception gap: the moment when the team’s mental model detaches from reality. Symptoms include:

- Continuing as planned while energy or drift exceeds perceived margins;

- Acting on outdated cues;

- Rejecting contradictory information;

- Belief in control after control has degraded.

By the time the gap is recognised, it may be too late to recover. Many casualties show intense corrective action in the final minutes, but by then the physics are uncompromising.

Closing the gap

Preventing this gap must be a deliberate design objective, not an assumed outcome of competence.

The first step in improving these behaviours is recognising that they are universal. Certainty compression, mutual competence blindness, and normality distortion are not character flaws. They are predictable human patterns that must be anticipated and managed. Only by accepting their presence can organisations design procedures, training, and governance that detect and counteract them before they create a situational awareness perception gap.

Across incidents, there is a consistent pathway that converts human tendencies into system-level vulnerability. Organisations need to put in place disruptors to block these paths.

Governance implications

The Dali and Maersk Shekou examples demonstrate a broader truth: experience alone is not a barrier strong enough to contain structural exposure.

Port authorities and regulators must assess infrastructure vulnerability proactively. Key questions include ‘where could normality distortion be masking exposure?’ and ‘which transits rely on assumed recovery margins near infrastructure?’

Designated Persons Ashore (DPAs) and company leaders must investigate cognitive patterns, not just procedural deviations, and evaluate safety based on detection of distortion, not absence of incidents. Key questions include:

- Where are we relying on memory in critical procedures?

- How explicit is cross-verification between Master and pilot?

- How often do we re-examine hazards in ‘normal’ operations?

Expertise needs structural counterweights

The industry values competence. But the strengths of competence (familiarity, efficiency, confidence) carry cognitive liabilities that can quietly undermine safety.

High-reliability maritime operations are not defined by the absence of incidents. They are defined by how effectively they interrupt the gradual, invisible drift toward catastrophe.

That requires more than vigilance. It requires structural counterweights embedded in procedures, training, governance, and the physical design of operations that expose and disrupt these hidden engines of maritime failure before they do their worst.

Andrew Baker is a marine pilot working in Whangārei Harbour, New Zealand.